
Overview

The official n8n-nodes-scrapegraphai community node exposes the full v2 API as a single node with seven resources: Scrape, Extract, Search, Crawl, Monitor, History, and Credit. Drop it into any n8n workflow and point it at a URL. You get markdown, structured JSON, screenshots, or a recurring monitor, wired into the rest of your stack through the 400+ nodes n8n already ships with.

Package on npm

n8n-nodes-scrapegraphai

Source on GitHub

Issues, PRs, and the changelog

Installation

Inside your n8n instance, open Settings → Community Nodes → Install and enter:

n8n-nodes-scrapegraphai

Self-hosted n8n only: n8n Cloud does not yet allow community nodes. If you don’t have a host, follow the self-hosting guide.

Credentials

Add a new ScrapeGraphAI API credential and paste your API key. n8n calls GET /api/credits to verify the key, and a green banner confirms it works.

Get your API key from the ScrapeGraphAI dashboard.
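You may want to check a key outside n8n, for instance when the credential test fails. In that case you can call the same endpoint directly.

The TypeScript sketch below makes a few assumptions:

- The key is sent in an SGAI-APIKEY header. That header name is an assumption and is not documented on this page, so confirm it in the API Reference.
- The HTTP client is passed in as a parameter. This keeps the sketch free of runtime dependencies and easy to test.

```typescript
// Minimal HTTP shapes so the sketch needs no DOM or Node typings.
interface HttpResponse {
  ok: boolean;
  status: number;
  json(): Promise<unknown>;
}

type Fetcher = (
  url: string,
  init: { method: string; headers: Record<string, string> },
) => Promise<HttpResponse>;

// Endpoint the node uses for its credential test (see Troubleshooting).
const CREDITS_URL = "https://v2-api.scrapegraphai.com/api/credits";

// ASSUMPTION: name of the header that carries the API key. Not documented on
// this page; confirm it in the API Reference before relying on it.
const API_KEY_HEADER = "SGAI-APIKEY";

// Resolves with the parsed credits payload when the key is accepted;
// rejects with the HTTP status otherwise.
async function verifyKey(apiKey: string, fetcher: Fetcher): Promise<unknown> {
  const res = await fetcher(CREDITS_URL, {
    method: "GET",
    headers: { [API_KEY_HEADER]: apiKey },
  });
  if (!res.ok) {
    throw new Error(`API key rejected: HTTP ${res.status}`);
  }
  return res.json();
}
```

On Node 18 or later, the global fetch should fit the Fetcher type, so verifyKey(key, fetch) makes the real call.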

What’s in the node

| Resource | Operations | What it does |
|---|---|---|
| Scrape | scrape | Fetch a page in markdown, HTML, JSON (AI-extracted), screenshot, links, summary, branding, or any combination |
| Extract | extract | Run a natural-language prompt over a URL, raw HTML, or markdown, with an optional JSON schema |
| Search | search | AI web search with inline content; optional rollup prompt across results |
| Crawl | start, getStatus, stop, resume, delete | Async multi-page crawls with patterns, depth, and per-page formats |
| Monitor | create, list, get, update, pause, resume, delete, activity | Cron-scheduled fetches with diff detection and webhooks |
| History | get, list | Look up past results by scrapeRefId; used to fetch full content for crawled pages |
| Credit | get | Check remaining credits and plan |

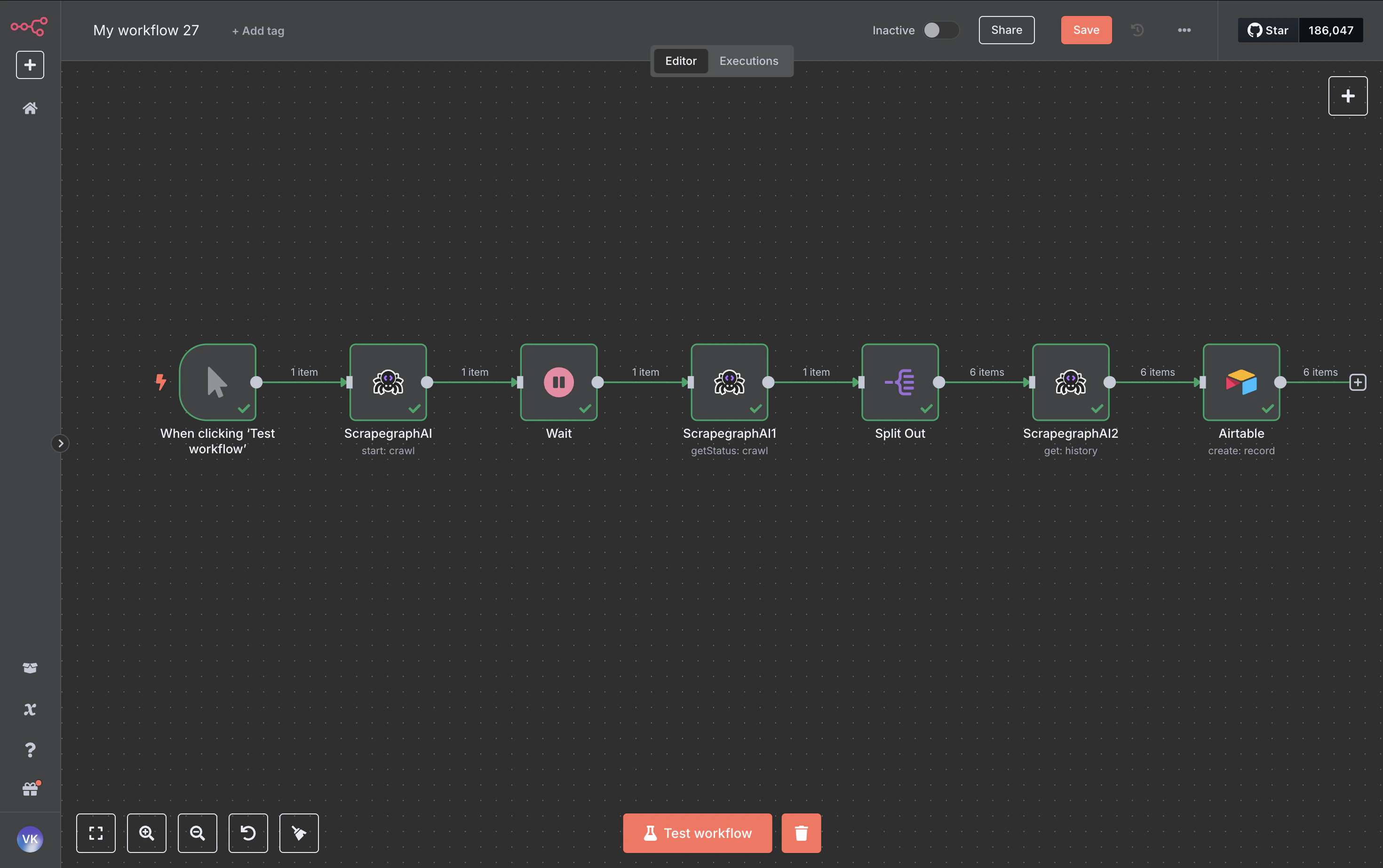

Example: crawl a site, save every page to Airtable

This walkthrough uses Crawl to discover pages, History to fetch each page’s full content, and an Airtable node to land the rows. The same pattern works for Notion, Google Sheets, Postgres, S3 — anywhere n8n can write.

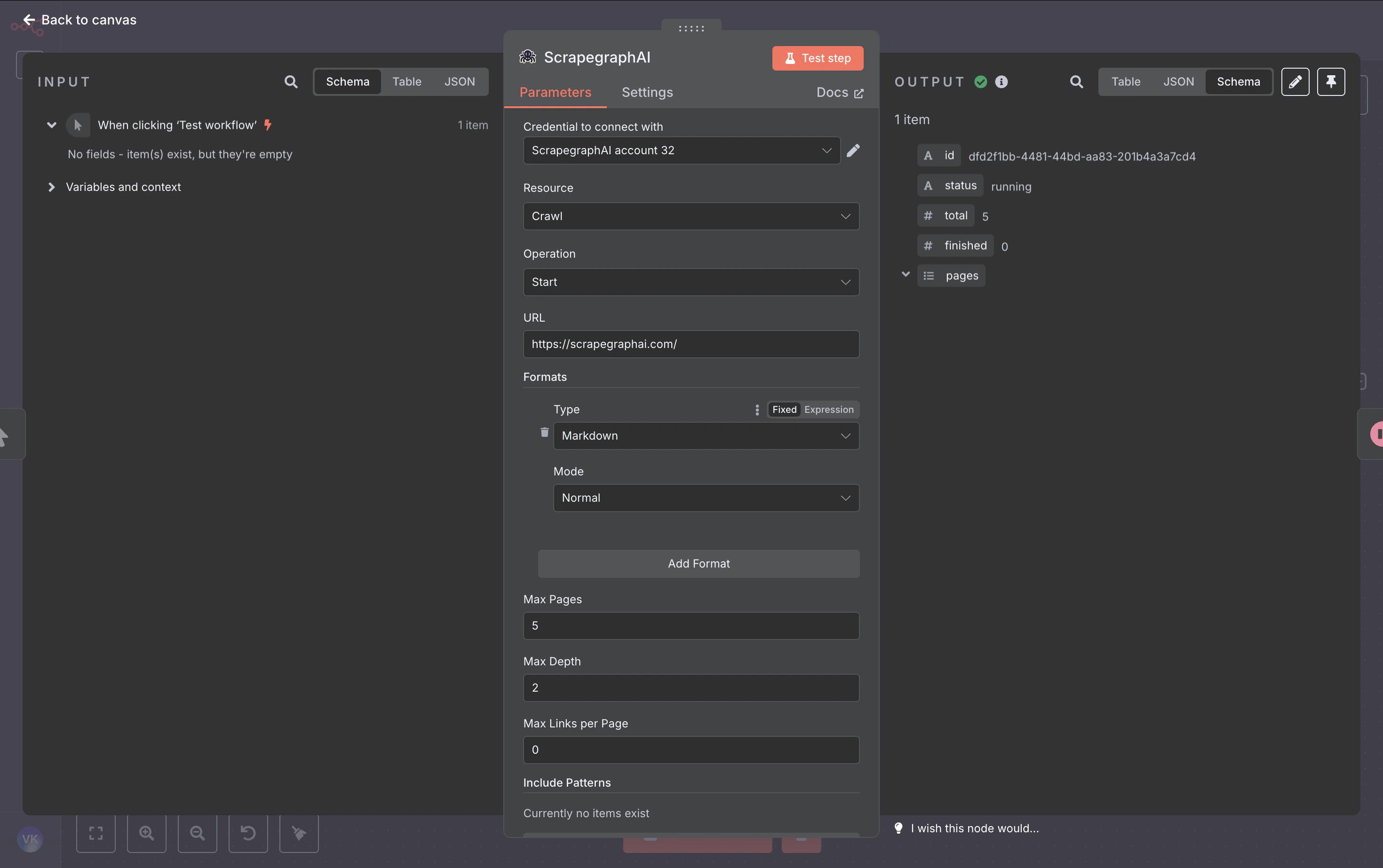

1. Crawl → Start

Kick off the crawl. The node returns the crawl job ID, which the rest of the workflow uses to track the job. Step 3 reads it from the id field of this node's output.

| Field | Value |
|---|---|
| Resource | Crawl |
| Operation | Start |
| URL | https://scrapegraphai.com/ |
| Formats | one entry, Markdown (mode Normal) |
| Max Pages | 6 |
| Max Depth | 2 |
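For reference, the same settings expressed as a request body might look like the sketch below. Only formats: [{type:"markdown"}] appears elsewhere in this guide. The url, mode, maxPages, and maxDepth key names are assumptions modeled on the field labels, so treat this as illustrative and check the API Reference for the real v2 field names.

```typescript
// HYPOTHETICAL body for Crawl → Start. Key names other than `formats` and
// `type` are ASSUMED from the n8n field labels, not taken from the API docs.
interface CrawlFormat {
  type: string; // e.g. "markdown"
  mode?: string; // ASSUMED key for the "mode Normal" option
}

interface CrawlStartBody {
  url: string; // ASSUMED key name for "URL"
  formats: CrawlFormat[];
  maxPages: number; // ASSUMED key name for "Max Pages"
  maxDepth: number; // ASSUMED key name for "Max Depth"
}

// Defaults reproduce the walkthrough: one markdown format, 6 pages, depth 2.
function crawlStartBody(url: string, maxPages = 6, maxDepth = 2): CrawlStartBody {
  return {
    url,
    formats: [{ type: "markdown", mode: "normal" }],
    maxPages,
    maxDepth,
  };
}
```

Raising Max Pages or Max Depth widens the crawl and, presumably, its credit cost. The small values here keep the walkthrough cheap.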

2. Wait

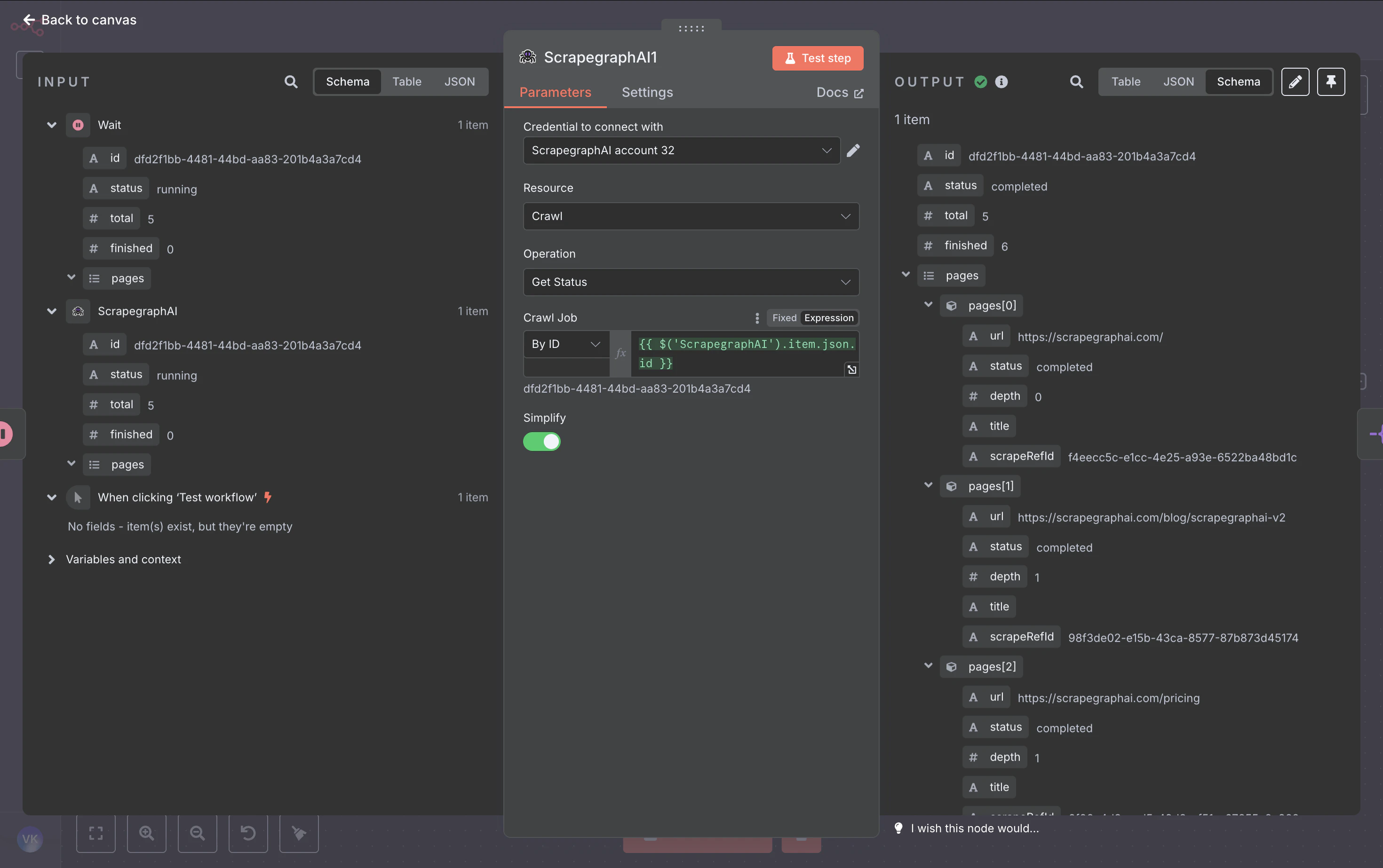

Add a Wait node (~60 seconds). Crawls are asynchronous, so give the worker time to fetch a few pages before polling.

3. Crawl → Get Status

Pull the job state. When status is completed (or partial), the response includes a pages array with one entry per crawled page. Each entry carries the page URL, depth, title, and a scrapeRefId pointer to the stored result.

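| Field | Value |
|---|---|
| Resource | Crawl |
| Operation | Get Status |
| Crawl ID | ={{ $('ScrapegraphAI').item.json.id }} (Resource Locator, expression) |

ScrapegraphAI in the expression is the name of the Crawl → Start node. Change it if you renamed that node.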

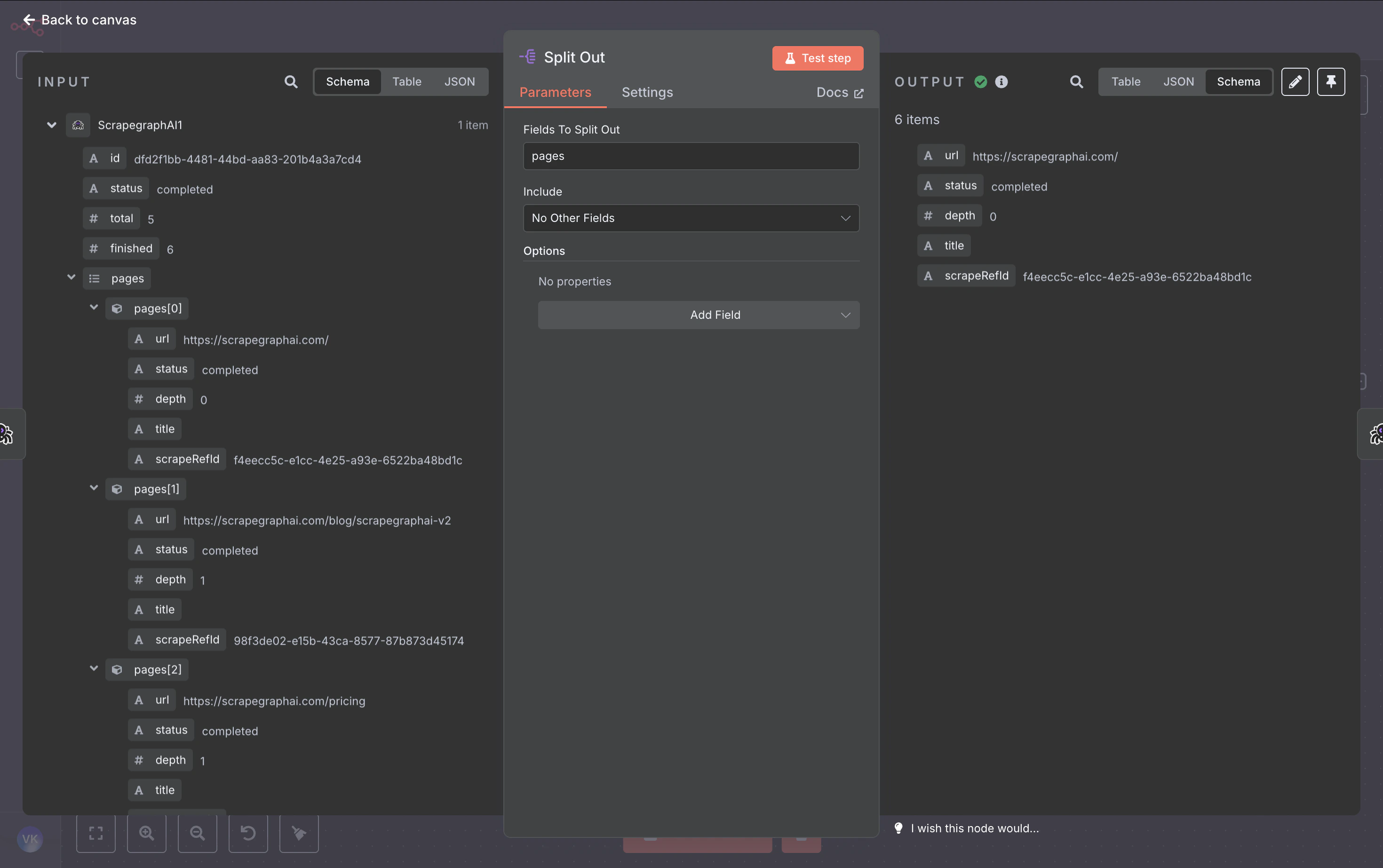
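A single fixed Wait can fire before the crawl finishes. The TypeScript sketch below shows the polling alternative, useful outside n8n or as a model for a Wait → Get Status loop. It polls until status is completed or partial, the two finished states this page mentions.

Some details are placeholders or illustrative choices:

- getStatus and sleep are injected placeholders; wrap the Get Status call and a timer in them.
- The 60-second interval mirrors the Wait node above. The cap of 10 attempts is an arbitrary value.
- Any other status is treated as still running, including failure states this page doesn't name.

```typescript
// One entry of the Get Status `pages` array, per the fields this guide lists.
interface CrawlPage {
  url: string;
  depth: number;
  title: string;
  scrapeRefId: string;
}

interface CrawlStatus {
  status: string; // "completed" and "partial" are the finished states named here
  pages: CrawlPage[];
}

const FINISHED = new Set(["completed", "partial"]);

// Polls until the crawl reports a finished state, sleeping between attempts,
// and gives up after maxAttempts. getStatus and sleep are injected so the
// loop runs without a network or real timers.
async function waitForCrawl(
  getStatus: () => Promise<CrawlStatus>,
  sleep: (ms: number) => Promise<void>,
  intervalMs = 60_000,
  maxAttempts = 10,
): Promise<CrawlStatus> {
  for (let attempt = 1; attempt <= maxAttempts; attempt++) {
    const current = await getStatus();
    if (FINISHED.has(current.status)) {
      return current;
    }
    // No point sleeping after the final attempt.
    if (attempt < maxAttempts) {
      await sleep(intervalMs);
    }
  }
  throw new Error(`Crawl not finished after ${maxAttempts} attempts`);
}
```

In Node, (ms) => new Promise((resolve) => setTimeout(resolve, ms)) works as the sleep argument.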

4. Split Out

Split the pages array into one item per page so the next node runs once per crawled URL.

| Field | Value |
|---|---|
| Field To Split Out | pages |

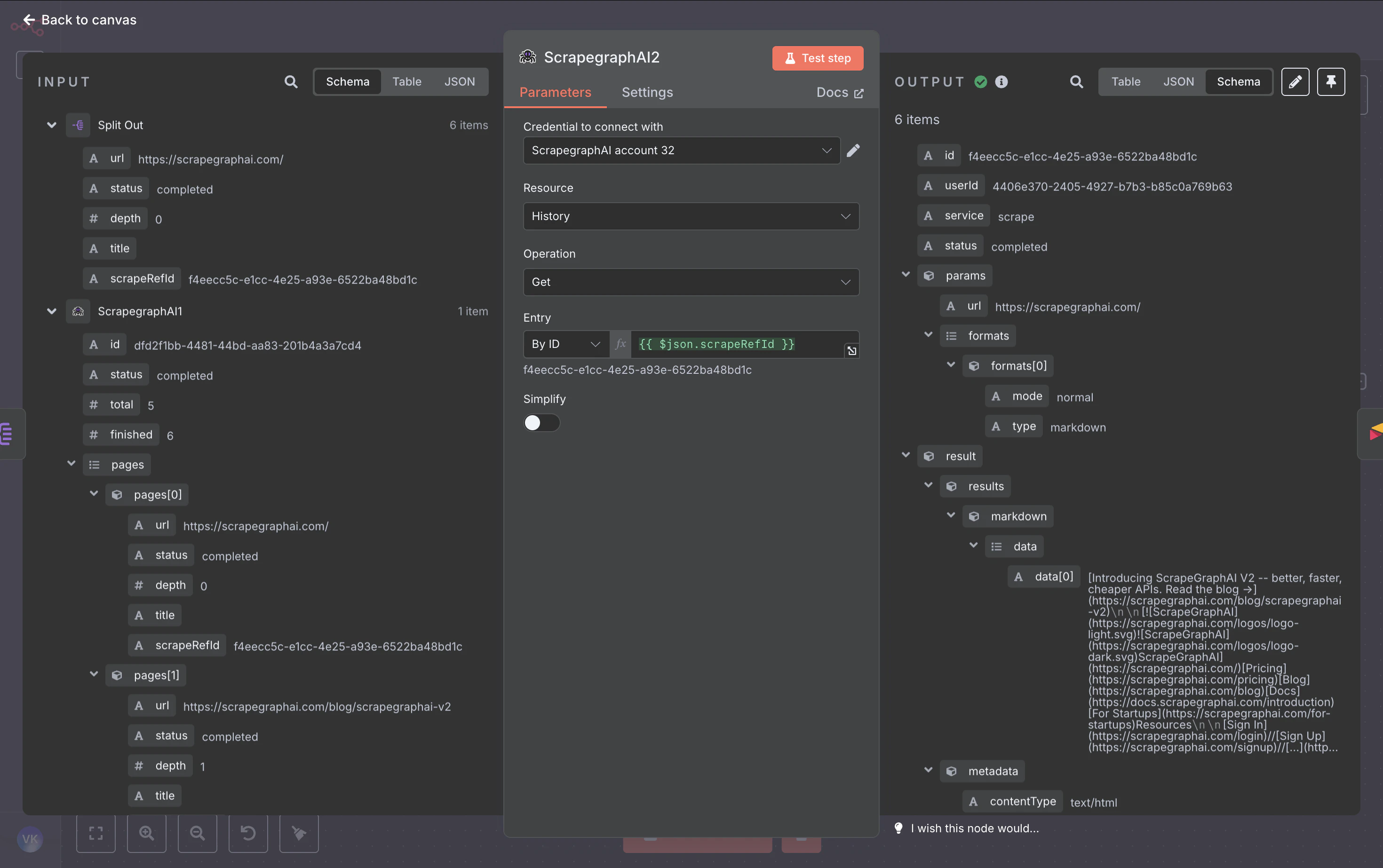
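What Split Out does here can be mimicked in a few lines, which helps when reasoning about what reaches the History node.

In this TypeScript sketch:

- The CrawlPage fields are the ones step 3 lists, with property names taken from the expressions in steps 5 and 6.
- The { json: ... } wrapper is how n8n represents each item.

```typescript
// One crawled page, as listed in the Get Status response.
interface CrawlPage {
  url: string;
  depth: number;
  title: string;
  scrapeRefId: string;
}

// n8n passes data between nodes as items that wrap their payload in `json`.
interface N8nItem<T> {
  json: T;
}

// Mirrors Split Out with "Field To Split Out" = pages: one input item holding
// an array becomes one output item per array element. A missing or empty
// array produces no items.
function splitOutPages(status: { pages?: CrawlPage[] }): N8nItem<CrawlPage>[] {
  return (status.pages ?? []).map((page) => ({ json: page }));
}
```

An empty pages array yields no items, so the nodes after Split Out typically don't run at all. That is what an unfinished crawl looks like downstream.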

5. History → Get

For each page, fetch the full content (markdown, HTML, JSON, or whatever formats the crawl captured) using the scrapeRefId from Split Out.

| Field | Value |
|---|---|
| Resource | History |
| Operation | Get |
| Entry | ={{ $json.scrapeRefId }} (Resource Locator, expression) |
| Simplify | off |

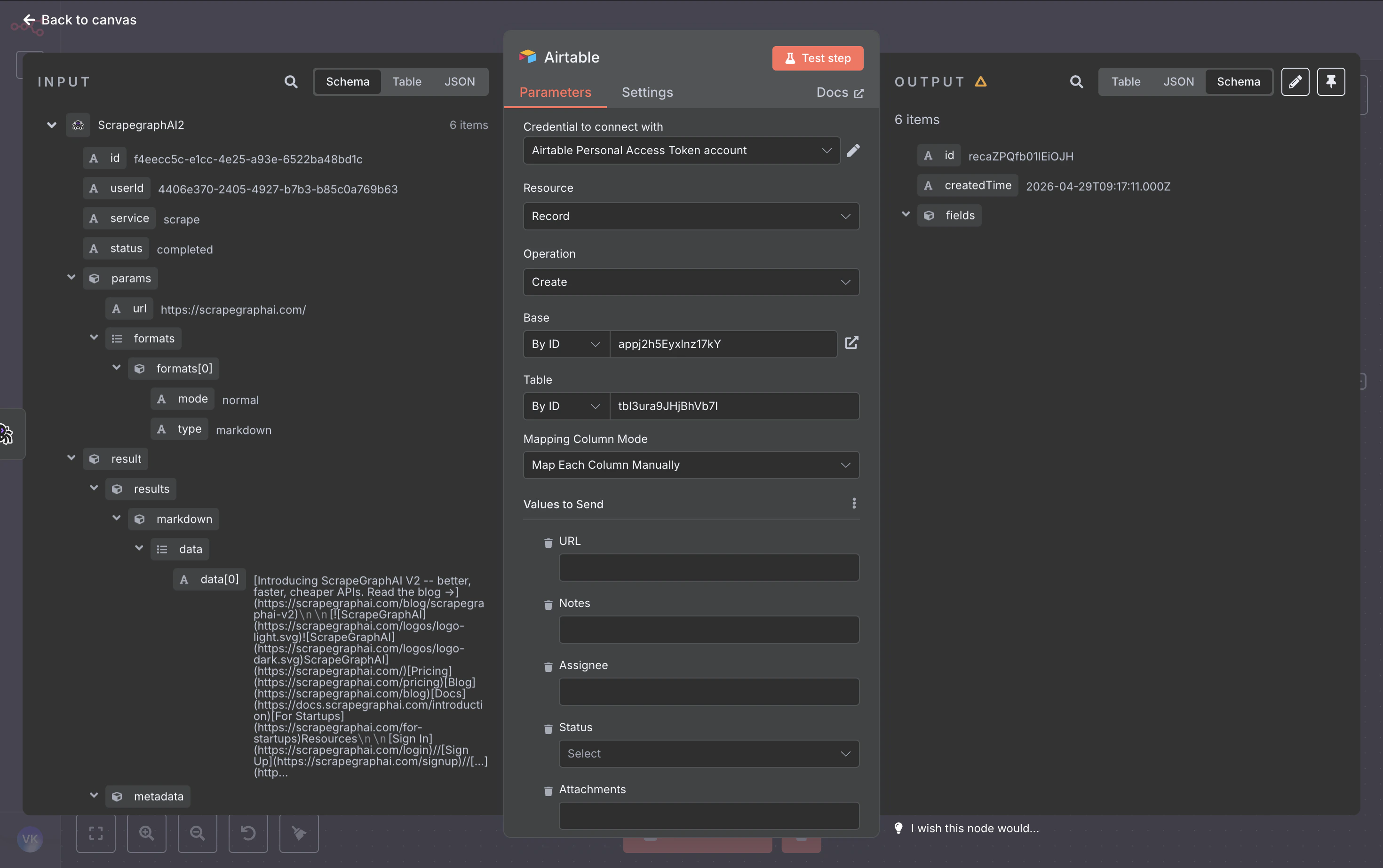

6. Airtable → Create

Map the page metadata and content into a row. Switch the Base and Table dropdowns to By ID mode and paste your IDs, then map the fields with expressions:

| Column | Expression |
|---|---|
| URL | ={{ $('Split Out').item.json.url }} |
| Title | ={{ $('Split Out').item.json.title }} |
| Depth | ={{ $('Split Out').item.json.depth }} |
| ContentType | ={{ $json.metadata.contentType }} |
| Markdown | ={{ $json.result.results.markdown.data[0] }} |

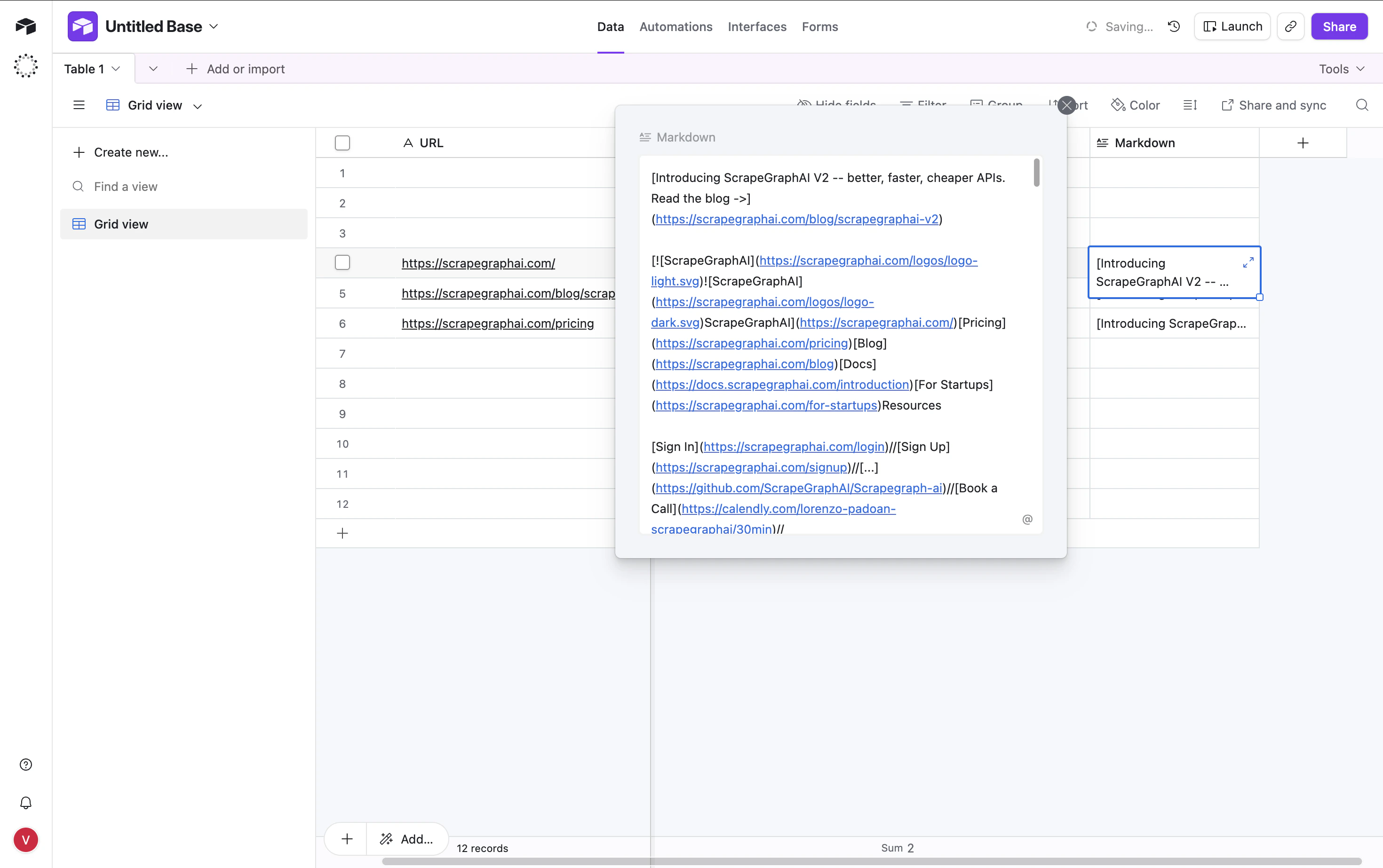
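The expression table boils down to a pure mapping from two inputs: the Split Out item for a page and the History → Get item for the same page.

The sketch below restates that mapping in TypeScript:

- The HistoryEntry interface covers only the paths the expressions read. The real response carries more.
- Falling back to an empty string when no markdown was captured is this sketch's own choice, not something the node does.

```typescript
// Page metadata from the Split Out item.
interface CrawlPage {
  url: string;
  depth: number;
  title: string;
  scrapeRefId: string;
}

// Only the History → Get paths the Airtable expressions read.
interface HistoryEntry {
  metadata: { contentType: string };
  result: { results: { markdown?: { data: string[] } } };
}

interface AirtableRow {
  URL: string;
  Title: string;
  Depth: number;
  ContentType: string;
  Markdown: string;
}

// Same mapping as the expression table: metadata from Split Out,
// content from the History → Get result for that page.
function toAirtableRow(page: CrawlPage, entry: HistoryEntry): AirtableRow {
  return {
    URL: page.url,
    Title: page.title,
    Depth: page.depth,
    ContentType: entry.metadata.contentType,
    // First markdown document; empty string if markdown wasn't captured.
    Markdown: entry.result.results.markdown?.data[0] ?? "",
  };
}
```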

7. Run it

Click Test workflow. The Airtable node runs once per crawled page and writes one row per page.

Output modes for AI Agent tools

When you attach the node as a tool to an n8n AI Agent, the Output parameter on Scrape, Extract, and Search decides what the agent actually sees:

- Simplified: a flattened response with the most useful top-level fields (id, json, results, usage, …). Easiest for an LLM to reason over.
- Raw: the full v2 API response, untouched.
- Selected Fields: a comma-separated allowlist of top-level keys.
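The Selected Fields mode can be pictured as a filter on top-level keys. This sketch illustrates that description and is not the node's source. Two behaviors are assumptions about how the node works:

- Each name in the list is trimmed of surrounding whitespace.
- Keys missing from the response are silently skipped.

```typescript
// Keeps only the top-level keys named in a comma-separated allowlist.
// ASSUMED behavior: names are trimmed, blanks ignored, absent keys skipped.
function selectFields(
  response: Record<string, unknown>,
  allowlist: string,
): Record<string, unknown> {
  const picked: Record<string, unknown> = {};
  const keys = allowlist
    .split(",")
    .map((key) => key.trim())
    .filter((key) => key.length > 0);
  for (const key of keys) {
    if (Object.prototype.hasOwnProperty.call(response, key)) {
      picked[key] = response[key];
    }
  }
  return picked;
}
```

Trimming the payload this way keeps the agent's context small, which is the main reason to prefer Simplified or Selected Fields over Raw.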

Patterns that carry over

| Pattern | Resource(s) | Notes |
|---|---|---|
| One-shot fetch | Scrape | Use formats=[{type:"markdown"}] for the cheapest pass |
| Structured extraction | Extract, or Scrape with the JSON format | A JSON schema is optional but pins the output shape |
| Multi-page archive | Crawl + History (this guide) | History → Get is how you retrieve the content a crawl captured |
| Recurring fetch with diff | Monitor | Set the webhookUrl field to an n8n Webhook node's URL for instant deltas |
| AI search rollup | Search with prompt | Single-call alternative to “search → scrape each result → summarize” |

Troubleshooting

- Airtable reports Unknown field name: "id". Your column names don’t match. Switch the Airtable node’s mapping to Map Each Column Manually and fill only the columns that exist in your table.
- Crawl Get Status returns pages: []. The crawl is still running. Increase the Wait duration or poll until status === "completed".
- History Get returns an old result. A scrapeRefId always points to the latest result stored for that pointer, and History does not re-fetch the page. Trigger a fresh crawl to refresh.
- Credentials test fails. Confirm the key is from the v2 dashboard. The node calls https://v2-api.scrapegraphai.com/api/credits; v1 keys won’t validate.

Resources

GitHub repo

Source code, issue tracker, and release notes

n8n Community Nodes

How to install and trust community nodes in n8n

API Reference

Full v2 endpoint reference — every parameter the node sends

Dashboard

Get an API key and check usage